NLP models and LLMs do not process raw text directly, but instead operate on numerical representations. In this context, tokenization is the process of converting a sequence of characters (a string) into a sequence of tokens, smaller units of text. These tokens are then mapped to numerical identifiers (integers), which correspond to positions in a vocabulary. This allows the model to operate on numbers rather than raw text.

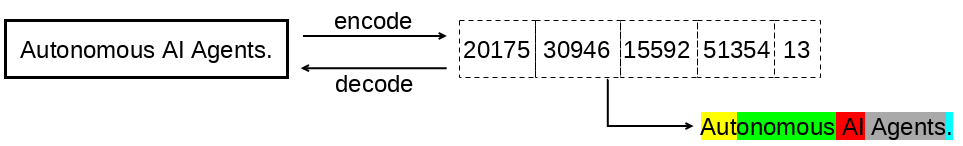

The image below illustrates the tokenization process, in which two main steps take place: encoding, responsible for transforming text into a sequence of integers, and decoding, which performs the inverse operation, converting this sequence back into the original text.

The tokenization shown in the image was generated using the Meta-Llama-3-8B model. Notice that whitespace is part of the tokens, as seen in ” AI” and ” Agents”. This means that “AI” and ” AI” are treated as distinct tokens, since the leading space completely changes their representation. Another important detail is that the “.” character is also treated as a token in the example text sequence. You can inspect the tokens directly in the model’s vocabulary by clicking here. This vocabulary is built during the tokenizer training process and consists of a limited set of tokens, each associated with a numerical identifier.

The goal of this post is to explain how the tokenization process works using the BPE algorithm. For this, I’ll cover the following topics:

- Types of tokenization

- Building a tokenizer using the BPE algorithm

- Vocabulary construction

- Results and analysis

Types of tokenization

- Character-based tokenization: is the simplest form of tokenization. Since a string is, by definition, a sequence of characters, each character can be treated as a unit and mapped to a numerical identifier. However, this approach has important limitations. By treating each character in isolation, the model loses awareness of larger structures, such as words and their meanings. In addition, token sequences become significantly longer, increasing computational cost and making it harder to learn more complex language patterns.

- Byte-based tokenization: assumes that a unicode string can be represented as a sequence of bytes, with values ranging from 0 to 255. This means that any text can be converted into this representation. Some characters are represented by a single byte (e.g., “A” → [65]), while others may span multiple bytes (e.g., “AI” → [65, 73] and “🤖” → [240, 159, 164, 150]). The main advantage of this approach is the fixed and compact vocabulary, limited to 256 possible values. However, similar to character-based tokenization, token sequences tend to be longer. This can increase computational cost, latency, and the risk of hitting the model’s context limit.

- Word-based tokenization: treats each word as a unit (token). The main challenge of this approach is vocabulary growth. Since new words constantly emerge, such as proper names, linguistic variations, and typos, it becomes difficult to efficiently limit the vocabulary size. In addition, words that were not seen during training are mapped to a special token, such as (unknown). As a result, different unseen words are represented in the same way, leading to information loss and potentially degrading model performance.

- Byte Pair Encoding (BPE): is an algorithm whose core idea is to iteratively identify the most frequent pairs of tokens in a dataset. Once identified, these pairs are replaced with a new token, which is then added to the vocabulary. The goal is to represent frequent character sequences as a single token, while less frequent sequences remain split across multiple tokens. In this way, BPE balances vocabulary size and sequence length, leading to a more efficient representation. To illustrate, consider the following example:

- Consider the word “banana”. Its byte representation is

[98, 97, 110, 97, 110, 97]. - The first step is to compute the frequency of adjacent token pairs and identify the most frequent one. The pair

"ba"appears once, while"an"and"na"appear twice each. Therefore, any of them could be selected. In this example, we choose"an". - Once the most frequent pair is identified, it is replaced with a new token. For instance, we can assign the value

256to represent this new token. Thus, the pair"an"is now represented by256. - Next, we replace all occurrences of the pair

"an"(represented by[97, 110]) in the original sequence. As a result, the sequence[98, 97, 110, 97, 110, 97]becomes[98, 256, 256, 97]. - As a result, the word “banana”, which was initially represented by a sequence of length 6, is now represented by a sequence of length 4.

- Consider the word “banana”. Its byte representation is

It is worth noting that, in addition to the tokenization types discussed above, there are other approaches.

Building a tokenizer using the BPE algorithm

The goal of this section is to demonstrate, using Python, how the BPE algorithm works in practice. The code below presents a Tokenizer class that implements this algorithm.

import json

from collections import Counter

class Tokenizer:

def __init__(self, vocabulary_size: int | None = None, min_frequency: int = 2):

self._vocabulary_size = vocabulary_size

self._min_frequency = min_frequency

def train(self, text: str):

tokens = list(text.encode("utf-8"))

use_vocab_limit = self._vocabulary_size is not None

max_merges = 0

if use_vocab_limit:

if self._vocabulary_size <= 256:

raise ValueError(

"Vocabulary size must be greater than 256."

)

max_merges = self._vocabulary_size - 256

if self._min_frequency < 2:

raise ValueError(

"Minimum frequency must be at least 2."

)

merges = {}

merge_index = 1

while True:

if use_vocab_limit and len(merges) >= max_merges:

break

if len(tokens) < 2:

break

pair, pair_count = self._get_most_frequent_pair(tokens)

if not use_vocab_limit and pair_count < self._min_frequency:

break

new_token = 255 + merge_index

tokens = self._apply_merge(tokens, pair, new_token)

merges[pair] = new_token

merge_index += 1

self._save_vocabulary(merges)

def encode(self, text: str) -> list[int]:

tokens = list(text.encode("utf-8"))

with open("merges.json", "r") as file:

data = json.load(file)

merges = {

tuple(pair): token

for pair, token in data["merges"]

}

while len(tokens) >= 2:

pairs = [pair for pair in zip(tokens, tokens[1:]) if pair in merges]

if not pairs:

break

pair = min(pairs, key=lambda pair: merges[pair])

new_token = merges[pair]

tokens = self._apply_merge(tokens, pair, new_token)

return tokens

def decode(self, tokens: list[int]) -> str:

with open("vocabulary.json", "r") as file:

data = json.load(file)

vocabulary = {

int(k): bytes(v["bytes"])

for k, v in data.items()

}

byte_sequence = b"".join(vocabulary[token] for token in tokens)

return byte_sequence.decode("utf-8", errors="replace")

def _get_most_frequent_pair(self, tokens: list[int]) -> tuple[int, int]:

pair_counter = Counter(zip(tokens, tokens[1:]))

pair, pair_count = pair_counter.most_common(1)[0]

return pair, pair_count

def _apply_merge(

self,

tokens: list[int],

most_frequent_pair: tuple[int, int],

new_token: int

) -> list[int]:

new_tokens = []

total_tokens = len(tokens)

i = 0

while i < total_tokens:

if (

i < total_tokens - 1 and

(tokens[i], tokens[i + 1]) == most_frequent_pair

):

new_tokens.append(new_token)

i += 2

else:

new_tokens.append(tokens[i])

i += 1

return new_tokens

def _save_vocabulary(self, merges: dict[tuple[int, int], int]):

vocabulary = {token: bytes([token]) for token in range(256)}

for (pair_0, pair_1), new_token in merges.items():

vocabulary[new_token] = vocabulary[pair_0] + vocabulary[pair_1]

vocabulary_view = {

str(k): {

"bytes": list(v),

"text": v.decode("latin-1")

}

for k, v in vocabulary.items()

}

merges_data = {

"merges": [

([a, b], token) for (a, b), token in merges.items()

]

}

with open("vocabulary.json", "w") as file:

json.dump(vocabulary_view, file, indent=2)

with open("merges.json", "w") as file:

json.dump(merges_data, file, indent=2)

Initially, the class constructor (__init__) takes two optional parameters: vocabulary_size and min_frequency. When vocabulary_size is defined, the algorithm performs merges until the desired vocabulary size is reached. In this case, the number of merges is limited based on this value. The min_frequency parameter defines the minimum frequency a token pair must have to be considered. When vocabulary_size is not provided, the algorithm continues running as long as there are pairs with a frequency greater than or equal to this threshold.

Before explaining the main methods (train, encode, and decode), it is important to understand some helper methods used throughout the process:

_get_most_frequent_pair: identifies the most frequent adjacent token pair in the sequence. The method returns a tuple containing the pair and its occurrence count. Consider the word"banana", whose token list is[98, 97, 110, 97, 110, 97]. The result would be((97, 110), 2), meaning the pair"an"appears twice._apply_merge: replaces the most frequent pair with a new token. This method takes the token list, the identified pair, and the new token to be created. Using the previous example, given[98, 97, 110, 97, 110, 97], the pair(97, 110), and a new token (e.g.,256), the result becomes[98, 256, 256, 97]._save_vocabulary: handles persistence of the training results by saving the vocabulary (vocabulary.json) and the performed merges (merges.json). Each vocabulary entry contains the token identifier, its corresponding byte sequence, and a textual representation (decoded using latin-1 for visualization), while merges store the combined pairs and the generated tokens. To illustrate, consider again the example of"banana":vocabulary.json: {“256”: {“bytes”: [97, 110], “text”: “an”}}merges.json: {“merges”: [[[97, 110], 256]]}- For simplicity, only the last generated token is shown in the vocabulary, although in practice it contains all tokens created up to that point.

The train method is responsible for effectively building the vocabulary.

- Initially, it takes a text input and converts it into a list of bytes.

- Next, validations are performed based on the

vocabulary_sizeandmin_frequencyparameters defined in the constructor. Since the algorithm starts with a base of 256 possible values (bytes), the vocabulary size cannot be less than or equal to 256. Additionally, the minimum frequency must be at least 2. - After these validations, the algorithm enters a loop, whose stopping criteria depend on the provided parameters:

- If

vocabulary_sizeis defined, the loop continues until the maximum number of allowed merges is reached. - Otherwise, the loop continues as long as there are pairs with frequency greater than or equal to

min_frequency. - The loop also stops if the token list has fewer than 2 elements.

- If

- Within the loop, the algorithm performs the following steps:

- Identifies the most frequent token pair (

_get_most_frequent_pair) - Creates a new token (starting from 256)

- Replaces occurrences of this pair in the token list (

_apply_merge) - Stores the performed merge

- Identifies the most frequent token pair (

- This process is repeated until one of the stopping conditions is met.

- Finally, the

vocabulary.jsonandmerges.jsonfiles are generated with the data built during training.

The encode method is responsible for receiving a text input and returning a list of integers representing the tokens.

- Initially, the text is converted into a list of bytes (tokens). In addition, the

merges.jsonfile is loaded, containing token pairs and their corresponding identifiers. - Next, the algorithm enters a loop that continues as long as there are consecutive token pairs in the sequence that exist in the merges dictionary.

- At each iteration, all possible consecutive pairs (

zip(tokens, tokens[1:])) that were learned during training are identified. - Among these pairs, the one with the smallest identifier (

min) is selected, ensuring that earlier merges are applied before more recent ones. - This is important because later merges may depend on earlier ones. Applying them in the correct order ensures consistency with the training process.

- Once the pair is selected, the merge is applied, producing a new token sequence.

- This process repeats until no more valid pairs are available for merging.

- Finally, the resulting list of tokens is returned.

Finally, the decode method is responsible for receiving a list of tokens and converting it back into text.

- Initially, the vocabulary is loaded from the

vocabulary.jsonfile, where each token is associated with its corresponding byte sequence. - Next, for each token in the list, its corresponding byte sequence is retrieved and concatenated into a single byte sequence (

b"".join(…)). - Finally, this byte sequence is decoded using UTF-8, resulting in the original string. If an error occurs during decoding, the

"replace"strategy is used, substituting invalid byte sequences with the special character � (U+FFFD).

Vocabulary construction

The goal of this section is to train the tokenizer using a dataset. This dataset was generated using ChatGPT and consists of Markdown content about artificial intelligence, made available for download as a .txt file.

The code below demonstrates how to train the tokenizer using the created dataset. Note that a maximum vocabulary size of 1000 tokens has been defined. As a result, the training process will stop once this limit is reached. Feel free to experiment with different vocabulary sizes or, alternatively, configure the training to continue until a minimum pair frequency threshold is reached.

from tokenizer import Tokenizer

with open("dataset.txt", "r", encoding="utf-8") as file:

text = file.read()

tokenizer = Tokenizer(vocabulary_size=1000)

tokenizer.train(text)

To validate the generated vocabulary, the code below demonstrates how to load the vocabulary.json and merges.json files, as well as display the vocabulary size and the total number of merges performed.

import json

with open("vocabulary.json", "r") as file:

data = json.load(file)

vocabulary = {

int(k): bytes(v["bytes"])

for k, v in data.items()

}

with open("merges.json", "r") as file:

data = json.load(file)

merges = {

tuple(pair): token

for pair, token in data["merges"]

}

print(f"Vocabulary Size: {len(vocabulary)}")

print(f"Total Merges: {len(merges)}")

Below are the outputs:

Vocabulary Size: 1000

Total Merges: 744

Results and analysis

The code below demonstrates how the text “Autonomous AI Agent.” is encoded using the previously generated vocabulary. Note that the tokenizer relies on the vocabulary.json and merges.json files produced during training.

from tokenizer import Tokenizer

tokenizer = Tokenizer()

text = "Autonomous AI Agent."

tokens = tokenizer.encode(text)

print(tokens)

The resulting token sequence is:

[65, 701, 258, 616, 46]

To visualize the text associated with each generated token, the code below can be used:

import json

with open("vocabulary.json", "r") as file:

data = json.load(file)

vocabulary = {

int(k): bytes(v["bytes"])

for k, v in data.items()

}

for token in tokens:

byte_seq = vocabulary[token]

text = byte_seq.decode("utf-8", errors="replace")

print(f"{token} -> '{text}'")

The result is as follows:

65 -> 'A'

701 -> 'utonomou'

258 -> 's '

616 -> 'AI Agent'

46 -> '.'

Note that tokens do not necessarily correspond to complete words, but rather to sequences of characters learned based on data frequency, directly reflecting the patterns captured during BPE training.

It is important to note that the more representative the dataset used, the more efficient the resulting tokenization tends to be. Revisiting the example of the text “Autonomous AI Agent.” and its Chinese version “自主人工智能代理。”, when encoding these texts using the generated vocabulary, it can be observed that the token sequence for the Chinese text is significantly longer compared to the English version. This happens because the dataset used during training is entirely in English. As a result, common patterns from this language are learned and represented by more compact tokens, while characters from other languages tend to be represented as byte sequences. This leads to longer token sequences, which can negatively impact representation efficiency, increase computational cost and latency, and ultimately degrade the performance of the language model.

"Autonomous AI Agent." -> [65, 701, 258, 616, 46]

"自主人工智能代理。" -> [232, 135, 170, 228, 184, 187, 228, 186, 186, 229, 183, 165, 230, 153, 186, 232, 131, 189, 228, 187, 163, 231, 144, 134, 227, 128, 130]

Finally, to validate the entire process, the code below encodes the dataset used during training using the encode method and then applies the decode method, verifying whether the reconstructed text matches the original.

with open("dataset.txt", "r", encoding="utf-8") as file:

text = file.read()

tokens = tokenizer.encode(text)

decoded_text = tokenizer.decode(tokens)

print(text == decoded_text)

The result is True, indicating that the encoding and decoding process correctly preserves the original text.

Leave a comment